Retrieval-Augmented Generation, or RAG, represents an exciting frontier in artificial intelligence and natural language processing. By bridging information retrieval and text generation, RAG can answer questions by finding relevant information and then synthesizing responses in a coherent and contextually rich way.

What is Retrieval-Augmented Generation (RAG)?

RAG is a method that combines two significant aspects:

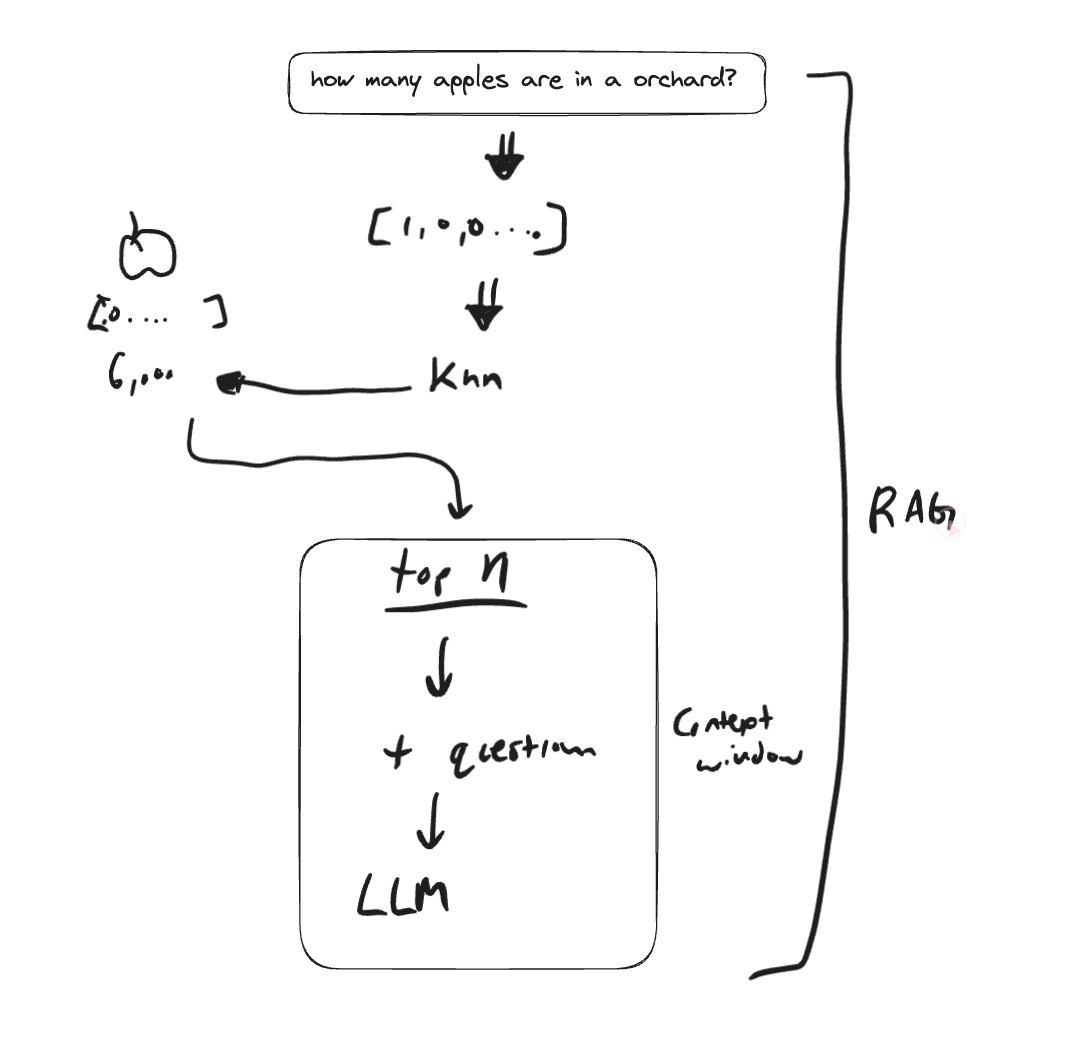

- Information Retrieval: This involves searching through large databases or collections of text to find documents that are relevant to a given query.

- Text Generation: Once relevant documents are found, a model like a Transformer is used to synthesize the information into a coherent and concise response.

Both stages are typically handled by machine learning models: a retriever that ranks documents by their relevance to the query, and a generator that writes the answer from the top-ranked documents.
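To make the retrieval step concrete, here is a minimal sketch that scores documents against a query using cosine similarity over word counts. It is an illustrative stand-in, not the method behind any particular RAG system: production retrievers usually rely on learned embeddings or a ranking function such as BM25. The names tokenize, cosine_similarity, and rank_documents, along with the sample documents and query, are assumptions made for this example.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine_similarity(a, b):
    """Cosine similarity between two term-count vectors (Counters)."""
    dot = sum(count * b[term] for term, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_documents(query, documents):
    """Return (document, score) pairs sorted from most to least similar."""
    query_vec = Counter(tokenize(query))
    pairs = [
        (doc, cosine_similarity(query_vec, Counter(tokenize(doc))))
        for doc in documents
    ]
    return sorted(pairs, key=lambda pair: pair[1], reverse=True)


# Hypothetical sample data, for illustration only
documents = [
    "RAG combines information retrieval with text generation.",
    "Transformers are a neural network architecture.",
    "Bananas are rich in potassium.",
]
query = "How does RAG use retrieval?"

doc_score_pairs = rank_documents(query, documents)
print(doc_score_pairs[0][0])
```

The resulting list of (document, score) pairs has the same shape as the doc_score_pairs variable used in the code later in this article.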

Why is RAG Important?

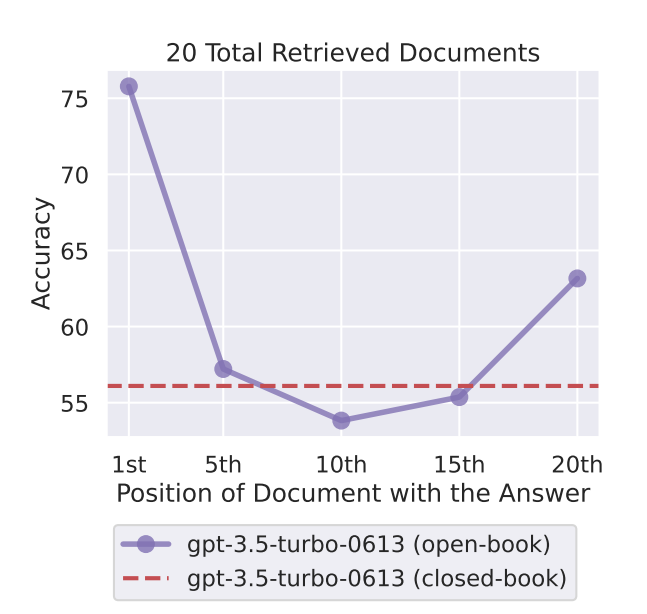

LLMs have limited context windows. The intuitive response is to increase the size of that context window, but researchers at Stanford found that a larger window does not by itself translate into better performance (measured by accuracy).

Models are better at using relevant information that occurs at the very beginning or end of their input context, and performance degrades significantly when they must access and use information located in the middle of it.

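So to work with more text than this window can usefully hold, we need Retrieval-Augmented Generation: only the passages most relevant to the query are retrieved and placed in the prompt.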
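One way to act on the position effect described above is to reorder retrieved passages so that the strongest ones sit at the start and end of the prompt and the weakest ones fall in the middle. The sketch below is an assumption about how this could be done, not a step this article's pipeline prescribes. The function name reorder_for_long_context and the sample list are hypothetical.

```python
def reorder_for_long_context(ranked_docs):
    """Place the best-ranked documents at the edges of the list.

    ranked_docs must be sorted from most to least relevant. Documents are
    dealt out alternately to the front and the back, so the least relevant
    ones end up in the middle.
    """
    front, back = [], []
    for index, doc in enumerate(ranked_docs):
        if index % 2 == 0:
            front.append(doc)
        else:
            back.append(doc)
    # Reverse the back half so the second-best document comes last
    return front + back[::-1]


# Hypothetical ranking: doc1 is the most relevant, doc5 the least
ranked = ["doc1", "doc2", "doc3", "doc4", "doc5"]
reordered = reorder_for_long_context(ranked)
print(reordered)  # ['doc1', 'doc3', 'doc5', 'doc4', 'doc2']
```

Whether this reordering helps in practice depends on the model and the task, so it is worth measuring before adopting it.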

Primary Use Cases of RAG

Customer Support

RAG can provide immediate, context-aware responses to customer queries by searching through existing knowledge bases and FAQs.

Summarization

RAG can analyze large documents, identify the most important information, and condense it into a readable summary.
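Before a large document can be searched or summarized piece by piece, it is usually split into smaller passages. The following is a minimal sketch of that step, assuming simple word-based chunks with a fixed overlap. The function name chunk_words and its default sizes are hypothetical, and real systems often split on sentences or tokens instead.

```python
def chunk_words(text, chunk_size=200, overlap=50):
    """Split text into chunks of at most chunk_size words.

    Consecutive chunks share `overlap` words, so content near a chunk
    boundary appears in both neighbouring chunks.
    """
    if overlap >= chunk_size:
        raise ValueError("overlap must be smaller than chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        # Stop once the current chunk has reached the end of the text
        if start + chunk_size >= len(words):
            break
    return chunks


# Tiny example with ten placeholder words
sample = " ".join(f"w{i}" for i in range(10))
chunks = chunk_words(sample, chunk_size=4, overlap=1)
print(chunks)  # ['w0 w1 w2 w3', 'w3 w4 w5 w6', 'w6 w7 w8 w9']
```

Each chunk can then be scored against the query on its own, so only the most relevant parts of the document reach the model.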

Research Assistance

In academic and corporate settings, RAG can sift through vast amounts of research papers and provide concise insights or answers to specific questions.

Conversational AI

RAG can be employed to build intelligent chatbots that can engage in meaningful dialogues, retrieve relevant information, and generate insightful responses.

Code: Using RAG to Provide Contextual Answers

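Here's a code snippet that demonstrates how to use RAG to pass extracted parts of a large document to a model along with a question and generate a conversational answer. This example uses the GPT-3.5 model through OpenAI's API. It assumes that query holds the user's question and that doc_score_pairs is a list of (document, score) pairs already sorted by relevance.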

import json

import requests

# Placeholder: replace with your own OpenAI API key
key = "API_KEY"

# query and doc_score_pairs come from the retrieval step (not shown here)
# Keep the five highest-scoring documents
top_n_docs = doc_score_pairs[:5]

# Concatenating the top 5 documents
text_to_summarize = "\n\n".join(doc for doc, score in top_n_docs)

# prompt as context
contexts = f"""
Question: {query}
Contexts: {text_to_summarize}
"""

content = f"""
You are an AI assistant providing helpful advice.
You are given the following extracted parts of a long document and a question.
Provide a conversational answer based on the context provided.
You should only provide hyperlinks that reference the context below.
Do NOT make up hyperlinks. If you can't find the answer in the context below,
just say "Hmm, I'm not sure. Try one of the links below." Do NOT try to make up an answer.
If the question is not related to the context, politely respond that you are tuned to only answer
questions that are related to the context. Do NOT however mention the word "context"
in your responses.
=========
{contexts}
=========
Answer in Markdown
"""

url = "https://api.openai.com/v1/chat/completions"

payload = json.dumps({
    "model": "gpt-3.5-turbo",
    "messages": [
        {
            "role": "user",
            "content": content
        }
    ]
})

headers = {
    'Authorization': f'Bearer {key}',
    'Content-Type': 'application/json'
}

response = requests.post(url, headers=headers, data=payload)
response.raise_for_status()  # fail early on HTTP errors such as an invalid key

# Keep only the generated text from the API response
just_text_response = response.json()['choices'][0]['message']['content']
print(just_text_response)

Live Example: